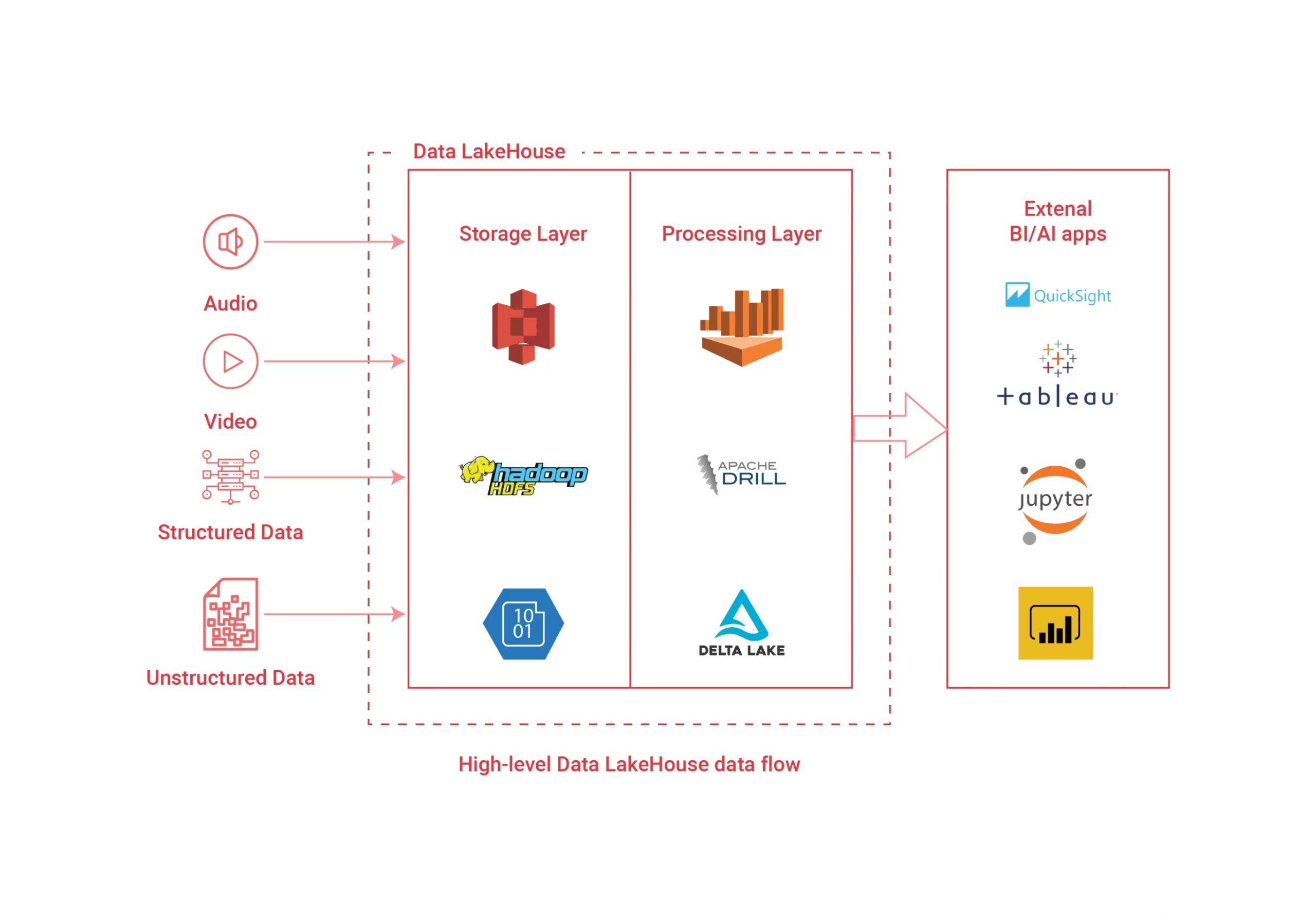

Modern data platforms typically separate storage from processing so that each can scale independently. A data lakehouse is an architecture that combines the structure and management features of a data warehouse (typical of most legacy systems) with the low-cost, flexible storage of a data lake.

A data lakehouse lets you apply the same schemas and structure you would use in a data warehouse to the raw, often unstructured or semi-structured data typically stored in a data lake. This makes information faster to find and access, so your team can start putting stored data to work sooner.
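To make the idea of applying a table schema to raw data concrete, here is a minimal Python sketch. It assumes records arrive as JSON lines, as they often do in a data lake, and uses a hypothetical `ORDERS_SCHEMA` with made-up column names. Real lakehouse table formats enforce schemas in their own ways, so treat this only as an illustration of the concept.

```python
import json

# Hypothetical table schema: column name -> expected Python type.
ORDERS_SCHEMA = {"order_id": int, "customer": str, "amount": float}


def apply_schema(raw_lines, schema):
    """Parse JSON lines and keep only the records that fit the schema.

    Returns (accepted, rejected). Accepted rows contain exactly the
    schema's columns, with values coerced to the declared types; extra
    fields in the raw record are dropped.
    """
    accepted, rejected = [], []
    for line in raw_lines:
        try:
            record = json.loads(line)  # json.JSONDecodeError is a ValueError
            row = {}
            for col, typ in schema.items():
                value = record[col]  # KeyError if the column is missing
                if value is None:
                    raise ValueError(f"null value in {col}")
                row[col] = typ(value)  # ValueError/TypeError if not coercible
        except (KeyError, TypeError, ValueError):
            rejected.append(line)
            continue
        accepted.append(row)
    return accepted, rejected


raw = [
    '{"order_id": 1, "customer": "acme", "amount": "19.99", "note": "rush"}',
    '{"order_id": 2, "customer": "globex"}',  # missing "amount"
    "not json at all",
]
rows, bad = apply_schema(raw, ORDERS_SCHEMA)
print(rows)  # [{'order_id': 1, 'customer': 'acme', 'amount': 19.99}]
print(len(bad))  # 2
```

Rejected lines are kept rather than silently discarded, so they can be inspected or reprocessed later.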

Building a Data Platform

Building a data platform within your business was once a convenience; now it is a necessity. Using actionable, data-driven insights to improve your customer experience can increase revenue and help define your brand. However, many companies find it difficult to decide how their data platform should be designed.

There is no industry-standard blueprint for IT teams to follow, and data layers look different at every company, typically depending on its industry and type of business. In this article, we’ll cover how you can lay the foundation for a modern data platform and make use of a data lakehouse.

Understanding a Data Platform

Think of your data platform as the central nervous system of your company’s data. Your platform should handle the collection, cleansing, transformation, and use of all your stored data, and turn that data into insights. Many companies now take a data-first approach, treating centralized data storage as an effective way to scale their use of data.

Companies no longer treat data as a means to an end, a final product, or an outcome. Instead, data is increasingly managed like software, something that needs continuous maintenance and improvement. Many companies dedicate entire teams and significant time to maintaining and optimizing their data, and that investment is what makes accurate, data-driven results possible.

ETL (extract, transform, load) and ELT (extract, load, transform) pipelines should be organized in layers, which can cause some confusion for teams unfamiliar with data lakehouses or modern data platforms.
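One common way to layer a pipeline, sometimes called a medallion architecture, moves data from a raw layer to a cleaned layer and then to an aggregated, business-ready layer. The Python sketch below models those three layers as plain functions over in-memory lists. The layer functions and sample fields are illustrative assumptions; a real pipeline would read and write tables in storage at each step.

```python
from collections import defaultdict


def raw_layer(source_rows):
    """Layer 1: land the data exactly as received (ELT loads before transforming)."""
    return list(source_rows)


def cleaned_layer(raw_rows):
    """Layer 2: drop malformed rows and normalize field values."""
    cleaned = []
    for row in raw_rows:
        if row.get("region") and row.get("amount") is not None:
            cleaned.append({
                "region": row["region"].strip().lower(),
                "amount": float(row["amount"]),
            })
    return cleaned


def aggregated_layer(cleaned_rows):
    """Layer 3: business-ready aggregates, here total revenue per region."""
    totals = defaultdict(float)
    for row in cleaned_rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


source = [
    {"region": " EU ", "amount": "10.5"},
    {"region": "us", "amount": 4},
    {"region": "eu", "amount": 2},
    {"region": None, "amount": 7},  # malformed: dropped in the cleaned layer
]
print(aggregated_layer(cleaned_layer(raw_layer(source))))
# {'eu': 12.5, 'us': 4.0}
```

Keeping the raw layer untouched means the later layers can always be rebuilt if a cleaning rule changes.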

How to Build a Modern Data Platform

A data platform needs a foundation, and each of the layers below helps you build your data lakehouse from the ground up. It can be hard to know where to start, but most modern data platforms share the same core layers, which are as follows.

Storage and Processing

Data needs somewhere to be stored and processed. Few companies transform and analyze their data the moment it becomes available, so storage is essential. As your company grows, you’ll likely deal with large volumes of data that quickly become unmanageable if they have nowhere to reside until they are processed.

Businesses of all sizes are moving their data to the cloud, and cloud-native storage, from data lakes to lakehouses, is now widespread. It’s hard to find a company that doesn’t store at least some of its data in the cloud.

The cloud offers affordable, accessible storage options as an alternative to on-premises solutions. The type of storage you choose depends on your business needs, but here we’re laying the groundwork for an effective data lakehouse. Whichever direction you take, it is very difficult to build a modern data platform without the cloud.
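Many cloud data lakes organize files in object storage using Hive-style partition paths such as `events/date=2024-01-15/`, which lets query engines skip partitions a query doesn’t need. The Python sketch below writes records in that layout to a local temporary directory as a stand-in for a cloud bucket. The `events` dataset, the `date` partition key, the single `part-0000.jsonl` file per partition, and the JSON-lines format are all assumptions for illustration.

```python
import json
import tempfile
from collections import defaultdict
from pathlib import Path


def write_partitioned(root, dataset, records, partition_key):
    """Write records as JSON lines under root/dataset/<key>=<value>/part-0000.jsonl."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[partition_key]].append(rec)
    written = []
    for value, rows in sorted(groups.items()):
        part_dir = Path(root) / dataset / f"{partition_key}={value}"
        part_dir.mkdir(parents=True, exist_ok=True)
        path = part_dir / "part-0000.jsonl"
        path.write_text("".join(json.dumps(r) + "\n" for r in rows))
        written.append(path)
    return written


records = [
    {"date": "2024-01-15", "user": "a", "action": "login"},
    {"date": "2024-01-16", "user": "b", "action": "purchase"},
    {"date": "2024-01-15", "user": "c", "action": "logout"},
]
with tempfile.TemporaryDirectory() as root:
    for path in write_partitioned(root, "events", records, "date"):
        print(path.relative_to(root).as_posix())
# events/date=2024-01-15/part-0000.jsonl
# events/date=2024-01-16/part-0000.jsonl
```

A query for a single day then only needs to read one directory instead of scanning every file in the dataset.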

Data Delivery

Every modern data platform needs an efficient way to deliver data from one system to another, a process known as data ingestion. As data volumes grow, infrastructure tends to become complex, and many teams end up handling large amounts of structured and unstructured data from many different sources.

Many off-the-shelf tools can help internal teams ingest data, but plenty of data teams also write custom code to deliver data from internal and external sources. Automating these workflows, increasingly with AI-assisted tooling, is a key part of the data delivery layer.
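A pattern that custom ingestion code commonly uses is incremental loading with a high-water mark: each run delivers only records newer than the latest timestamp seen in the previous run. The Python sketch below illustrates the idea with in-memory lists standing in for a source system and a landing zone. The `updated_at` field and the `ingest_incremental` function are hypothetical.

```python
from datetime import datetime


def ingest_incremental(source_rows, destination, watermark):
    """Copy rows with updated_at > watermark into destination.

    Returns the new watermark (the latest updated_at delivered), which the
    caller should persist so the next run resumes where this one stopped.
    """
    new_rows = [r for r in source_rows if r["updated_at"] > watermark]
    new_rows.sort(key=lambda r: r["updated_at"])
    destination.extend(new_rows)
    return new_rows[-1]["updated_at"] if new_rows else watermark


source_table = [
    {"id": 1, "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2024, 1, 1, 10, 0)},
]
landing_zone = []
wm = ingest_incremental(source_table, landing_zone, datetime.min)  # first run: both rows
source_table.append({"id": 3, "updated_at": datetime(2024, 1, 1, 11, 0)})
wm = ingest_incremental(source_table, landing_zone, wm)  # second run: only id 3
print([r["id"] for r in landing_zone])  # [1, 2, 3]
print(wm)  # 2024-01-01 11:00:00
```

Because the comparison is strict, a late-arriving row with exactly the watermark’s timestamp would be skipped; production pipelines often guard against this with overlapping time windows plus deduplication.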

Data Transformation

Raw data must be cleaned and prepared before it can be used for analysis and reporting. This process, called data transformation, is an essential step in building a modern data platform such as a data lakehouse.

Once you’ve transformed your data, you can move on to data modeling, which defines how the data in your lakehouse is organized: its tables, fields, and the relationships between them, often documented as a diagram. Transformation makes the data usable, while modeling makes its structure easy to understand. Once the model is in place, your data is ready for the all-important analytics phase.
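As a small illustration of transformation, the Python sketch below cleans hypothetical customer records: it trims and lowercases email addresses, normalizes name spacing and capitalization, parses signup dates from two assumed input formats into a single date type, and drops duplicate customers by email. The field names and date formats are assumptions; the right rules depend entirely on your own data.

```python
from datetime import datetime

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")  # assumed input formats


def parse_date(text):
    """Try each known format; unparseable dates become None instead of failing the batch."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text.strip(), fmt).date()
        except ValueError:
            continue
    return None


def transform(records):
    cleaned, seen = [], set()
    for rec in records:
        email = (rec.get("email") or "").strip().lower()
        if not email or email in seen:
            continue  # skip blank emails and duplicate customers
        seen.add(email)
        cleaned.append({
            "email": email,
            # Collapse repeated whitespace, then capitalize each word.
            "name": " ".join((rec.get("name") or "").split()).title(),
            "signup_date": parse_date(rec.get("signup") or ""),
        })
    return cleaned


raw_customers = [
    {"email": " Ana@Example.com ", "name": "ana  silva", "signup": "2024-03-01"},
    {"email": "ana@example.com", "name": "Ana Silva", "signup": "03/01/2024"},
    {"email": "bo@example.com", "name": " bo chen", "signup": "03/05/2024"},
]
for row in transform(raw_customers):
    print(row)
```

Keeping the first occurrence of each email is one simple deduplication rule; others, such as keeping the most recent record, may suit your data better.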

Analytics

There is no point in collecting data if your business can’t use it effectively, which is where analytics comes into play. Without analytics, your data has little meaning, and the internal statistics it produces are a crucial piece of the data puzzle.

Many effective analytics tools are available today, and your data or development teams can help you choose the right one. The analytics layer of your data stack shapes how you interpret your data, so choose your software carefully.

Observable Data

Because modern data systems are so complex, your data team needs a degree of observability to determine whether the information presented is trustworthy. Your organization can’t afford to spend time on partial or incorrect data.

With effective data observability, your teams can understand the health of your data. You’ll apply lessons from DevOps (development operations) practices to your data pipelines, with a focus on usable, actionable data.

Check your data for freshness, correct formats, completeness, schema, and lineage. Good observability software should integrate seamlessly with your data platform. This layer also covers security, compliance, and scaling observability across large volumes of data.
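Several of these checks can start out as simple assertions that run after each pipeline load. The Python sketch below covers freshness, schema, completeness, and format over a list of dictionaries. The thresholds, the column names, the assumption that the table has an `email` column, and the loose email pattern are all illustrative choices; dedicated observability tools go much further, and lineage tracking is not shown here.

```python
import re
from datetime import datetime, timedelta

# Deliberately loose pattern: something@something.something, no spaces.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def check_table(rows, expected_columns, loaded_at, now,
                max_age=timedelta(hours=24), max_null_rate=0.05):
    """Run basic health checks; return a list of problems (empty means healthy)."""
    problems = []
    # Freshness: was the table loaded recently enough?
    if now - loaded_at > max_age:
        problems.append(f"stale: last load was {now - loaded_at} ago")
    # Schema: does every row have exactly the expected columns?
    for i, row in enumerate(rows):
        if set(row) != set(expected_columns):
            problems.append(f"schema mismatch in row {i}: {sorted(row)}")
    # Completeness: fraction of missing (None) values per column.
    for col in expected_columns:
        missing = sum(1 for row in rows if row.get(col) is None)
        if rows and missing / len(rows) > max_null_rate:
            problems.append(f"{col}: {missing}/{len(rows)} values missing")
    # Format: non-null emails should look like email addresses.
    for i, row in enumerate(rows):
        email = row.get("email")
        if email is not None and not EMAIL_RE.match(email):
            problems.append(f"bad email format in row {i}: {email!r}")
    return problems


now = datetime(2024, 6, 1, 12, 0)
user_rows = [
    {"id": 1, "email": "a@example.com"},
    {"id": 2, "email": None},
    {"id": 3, "email": "not-an-email"},
]
for problem in check_table(user_rows, ["id", "email"],
                           loaded_at=datetime(2024, 5, 30, 12, 0), now=now):
    print(problem)
```

Returning a list of problems, rather than raising on the first one, lets a scheduler report every issue from a load at once and decide whether to block downstream jobs.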

The Discovery Level

Finally, you need real-time access to your data, and traditional data catalogs and warehouses alone no longer cut it. Consistent access to reliable data is necessary to run a successful business in any industry. Data discovery fills the gap where those tools lack support for unstructured data.

Data discovery offers a real-time view of the health of your data and supports the optimization of both data warehouses and data lakes. It also lets your team confirm that their assumptions about your data match what that data actually contains.
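To illustrate what automated discovery adds, the Python sketch below scans a set of datasets, derives each one’s columns, row count, and most recent timestamp directly from the data, and then finds every dataset that contains a given column. The dataset names, the `updated_at` field, and the in-memory dictionary standing in for real storage are all hypothetical; discovery tools perform this kind of profiling against live warehouses and lakes.

```python
from datetime import datetime


def profile_datasets(datasets):
    """Build metadata for each dataset (a list of row dicts) by inspecting its contents."""
    catalog = {}
    for name, rows in datasets.items():
        columns = sorted({col for row in rows for col in row})
        timestamps = [row["updated_at"] for row in rows
                      if row.get("updated_at") is not None]
        catalog[name] = {
            "columns": columns,
            "row_count": len(rows),
            "last_updated": max(timestamps) if timestamps else None,
        }
    return catalog


def find_by_column(catalog, column):
    """Return the sorted names of datasets that contain the given column."""
    return sorted(name for name, meta in catalog.items() if column in meta["columns"])


datasets = {
    "orders": [{"order_id": 1, "customer_id": 7, "updated_at": datetime(2024, 6, 1)}],
    "customers": [
        {"customer_id": 7, "email": "a@example.com", "updated_at": datetime(2024, 5, 20)},
        {"customer_id": 8, "email": "b@example.com", "updated_at": datetime(2024, 5, 28)},
    ],
    "clickstream": [{"session": "s1", "page": "/home"}],
}
catalog = profile_datasets(datasets)
print(find_by_column(catalog, "customer_id"))  # ['customers', 'orders']
print(catalog["customers"]["last_updated"])  # 2024-05-28 00:00:00
```

Because the metadata is derived from the data itself rather than maintained by hand, rerunning the profile keeps it in step with what the datasets actually contain.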

Utilizing the Data Lakehouse

Together, the layers described above lead you toward a data lakehouse architecture. The data warehouse paradigm stores data in an organized, structured form. The data lakehouse extends that structure to the unstructured data held in a data lake, turning it into something you can use to grow your business and strengthen your brand.

The business intelligence that drives your company’s data operations is a critical component of your success. Aim to turn your data software and services into actionable insights throughout your data team’s day-to-day work.

There has never been a more important time to put the best (and most modern) practices into place so that you can make reliable, data-driven decisions every day. Fast, organized access to your data matters to you and to everyone on your team who relies on it. Consider starting to build your modern data platform today.